Equinix Accelerates Enterprise AI Workloads with Fabric Intelligence


Equinix is accelerating enterprise AI workloads at a moment when enterprises are constrained no longer by AI ambition but by infrastructure readiness. While models, agents, and inference capabilities are advancing rapidly, the underlying network layer remains rigid, manually managed, and fundamentally misaligned with the demands of distributed intelligence systems.

At a structural level, this creates a growing disconnect between AI velocity and infrastructure capability. AI systems require real-time responsiveness, low-latency interconnections, and dynamic scaling across environments. Traditional networks, designed for predictable workloads, struggle to keep pace.

From a CX standpoint, this translates into slower feature rollouts, inconsistent application performance, and increased downtime risk. The problem becomes critical when customer-facing AI systems, such as recommendation engines, copilots, or autonomous workflows, fail to deliver the expected responsiveness.

The deeper implication is clear: infrastructure is no longer a backend utility. It is becoming a determinant of experience quality.

Why Legacy Networks Are Failing AI

Traditional networking architectures were built around static provisioning, human-driven configuration, and reactive monitoring. These assumptions break down entirely in the context of distributed, real-time AI environments.

Manual workflows introduce latency not just in execution, but in decision-making. Deployment cycles stretch into weeks, while AI systems iterate in hours or minutes. Visibility gaps further compound the problem, making it difficult to diagnose issues across multi-cloud and edge environments.

This becomes critical when enterprises deploy agentic AI systems that autonomously interact, learn, and execute tasks. These systems demand continuous adaptation, not periodic updates.

“The whole concept of AI is to make processes faster, and manual processes for network monitoring and management are difficult, if not impossible, to scale effectively.” — Jim Frey, Principal Analyst, Omdia

The deeper implication is that the industry is moving from software-defined networking to AI-defined networking—where telemetry is continuously interpreted and acted upon by intelligent systems.

This is the context in which Equinix's move to accelerate enterprise AI workloads becomes strategically inevitable.

From Infrastructure to Intelligence Layer

Strategically, Equinix is repositioning itself beyond its traditional role as a digital infrastructure provider into an AI-native orchestration layer for enterprise networks.

Fabric Intelligence represents a shift from:

- Managing infrastructure → Delegating decisions to AI systems

- Static configurations → Dynamic, adaptive optimization

- Operational overhead → Autonomous execution

This is where the shift occurs. By embedding AI directly into the control plane, Equinix is moving up the value chain—from providing capacity to delivering intelligence-driven infrastructure outcomes.

“All enterprises are focused on leveraging AI to transform their business, but most lack the infrastructure needed to deploy it at scale…” — Jon Lin, Chief Business Officer, Equinix

Strategically, this indicates a clear intent:

- Own the orchestration layer of multi-cloud networking

- Abstract complexity through AI agents and natural language interfaces

- Convert infrastructure into a competitive advantage rather than a constraint

The deeper implication is that infrastructure providers are evolving into platform players in the AI economy.

Competitive Positioning in the AI Infrastructure Race

The competitive landscape around AI infrastructure is fragmented across hyperscalers, colocation providers, and network vendors. Each brings a partial solution, but none fully bridge the gap between global infrastructure scale and AI-native orchestration.

Hyperscalers offer integrated AI and networking capabilities, but often within walled ecosystems, limiting flexibility. Traditional infrastructure providers deliver physical scale but lack intelligent automation layers. Network vendors bring automation, but without global interconnection ecosystems.

Equinix’s differentiation lies in combining:

- A global footprint of interconnected data centers

- A neutral, multi-cloud ecosystem

- An AI-native operational layer

This hybrid positioning allows Equinix to function as a neutral intelligence fabric across clouds, edge, and enterprise environments.

This is where Equinix's approach to accelerating enterprise AI workloads stands out: not as an incremental upgrade, but as a structural bridge across fragmented infrastructure layers.

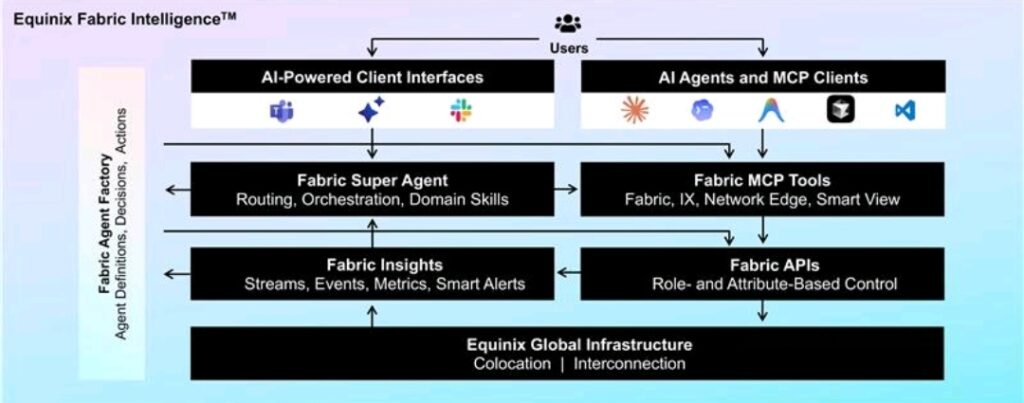

Inside Fabric Intelligence

Fabric Intelligence operates as an AI-native control layer that orchestrates networking across distributed environments.

Its architecture includes:

- Fabric Super Agent

An AI superagent that lets users design, deploy, and manage networks using natural language. This reduces deployment timelines from weeks to minutes and removes the dependency on specialized interfaces or APIs.

- MCP Server

A Model Context Protocol (MCP) server that enables integration with AI development tools such as Codex, Copilot, and other agent-based systems. This connects developer workflows directly to network operations.

- Fabric Application Connect

A private connectivity marketplace that lets enterprises securely access AI service providers without exposing sensitive data to the public internet.

- Fabric Insights

A predictive monitoring system that analyzes real-time telemetry to detect anomalies and maintain network health proactively.

Operationally, this translates into autonomous provisioning, continuous optimization, and predictive maintenance.

This becomes critical in managing AI workloads that span multiple environments simultaneously. The deeper implication is that networks evolve from being configured systems to self-learning systems.
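Fabric Insights is described only at a high level, so the following is a minimal, hypothetical sketch of the general idea behind flagging anomalies in real-time network telemetry. It is not Equinix's algorithm: it assumes a stream of latency samples in milliseconds and swaps in a simple rolling z-score test. The class name `RollingZScoreDetector` and its `window` and `threshold` parameters are illustrative assumptions.

```python
from collections import deque
from statistics import mean, stdev


class RollingZScoreDetector:
    """Hypothetical telemetry anomaly detector (illustrative only, not Fabric Insights).

    Flags a sample when it lies more than `threshold` sample standard
    deviations from the mean of the last `window` accepted samples.
    """

    def __init__(self, window: int = 20, threshold: float = 3.0) -> None:
        if window < 2:
            # stdev() needs at least two data points.
            raise ValueError("window must hold at least 2 samples")
        self._samples = deque(maxlen=window)  # oldest samples drop off automatically
        self.threshold = threshold

    def observe(self, value: float) -> bool:
        """Return True if `value` is anomalous relative to the current baseline."""
        is_anomaly = False
        # Only score once the window is full, so the baseline is meaningful.
        if len(self._samples) == self._samples.maxlen:
            mu = mean(self._samples)
            sigma = stdev(self._samples)
            # A perfectly flat window (sigma == 0) is treated as having no usable spread.
            if sigma > 0 and abs(value - mu) / sigma > self.threshold:
                is_anomaly = True
        # Keep anomalies out of the baseline so one spike does not inflate it.
        if not is_anomaly:
            self._samples.append(value)
        return is_anomaly


if __name__ == "__main__":
    detector = RollingZScoreDetector(window=5, threshold=3.0)
    latencies_ms = [10.0, 11.0, 10.5, 9.5, 10.0, 10.2, 48.0, 10.1]
    for sample in latencies_ms:
        print(sample, detector.observe(sample))
```

Excluding flagged samples from the baseline keeps a single spike from masking the next one, but it also means a genuine, sustained shift in latency would keep being flagged until the window is reset. Production monitoring systems typically use more robust or seasonality-aware models; this sketch only illustrates the "learn a baseline, flag deviations" pattern.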

CX Becomes Infrastructure-Driven

From a CX standpoint, the transformation is profound because it redefines where experience quality is created.

Customer Layer

Users experience faster application response times, fewer disruptions, and more consistent service delivery.

Business Layer

Organizations benefit from reduced operational costs, faster time-to-market for AI-driven services, and lower reliance on highly specialized talent.

System Layer

Infrastructure becomes adaptive, capable of responding to real-time conditions, predicting failures, and optimizing performance continuously.

This becomes critical as enterprises compete not just on product features, but on experience velocity—how quickly they can deliver value to customers.

The deeper implication is that network intelligence becomes a direct driver of customer satisfaction metrics, including latency, uptime, and responsiveness.

This is where the shift occurs: CX is no longer application-centric—it is infrastructure-centric.

Maturity and Enterprise Readiness

Fabric Intelligence represents an advanced, predictive CX maturity level, where systems not only respond to issues but anticipate and prevent them.

However, enterprise readiness varies. Many organizations still operate with legacy processes, siloed teams, and governance models not designed for autonomous systems.

The gap lies in:

- Organizational readiness for AI-driven decision-making

- Integration of AI agents into operational workflows

- Trust in automated systems for critical infrastructure

The trigger for adoption will be the need to scale distributed AI workloads efficiently and reliably.

Decision Lens for Enterprises

For enterprises evaluating their AI infrastructure strategy, the decision framework is shifting.

- Build → High cost, long timelines, limited scalability

- Buy → Faster deployment but constrained flexibility

- Partner (Equinix) → Balanced approach with scalability, speed, and reduced complexity

Risk levels remain moderate, primarily driven by dependency on AI maturity and integration complexity. Implementation complexity is also moderate to high, given the need to align systems, processes, and talent.

However, the upside is significant: faster deployment cycles, improved operational efficiency, and enhanced CX outcomes.

Industry Shift Toward Autonomous Infrastructure

The introduction of AI-native networking accelerates broader industry shifts:

- Talent Transformation

Demand moves from traditional network engineers to AI infrastructure operators and automation specialists.

- Competitive Dynamics

Infrastructure providers without AI capabilities risk becoming commoditized.

- Ecosystem Evolution

Open standards and interoperable AI agents gain importance, enabling collaborative innovation across platforms.

This becomes critical as enterprises demand modular, interoperable infrastructure ecosystems that can evolve with their AI strategies.

The Future of AI Infrastructure

Looking ahead, infrastructure is evolving toward systems that are:

- Self-managing

- Self-optimizing

- Experience-aware

This represents a fundamental redefinition of infrastructure—from passive enabler to active participant in business outcomes.

Equinix is accelerating enterprise AI workloads not just by improving networking efficiency, but by introducing a new paradigm in which infrastructure continuously learns, adapts, and aligns with business and CX objectives.

Key Takeaways

- AI adoption is now constrained by infrastructure, not models

- Networks are evolving into intelligent, autonomous systems

- CX outcomes are increasingly tied to infrastructure performance

- AI-native orchestration is becoming a competitive necessity

- Equinix is positioning itself as a control plane for the AI-driven enterprise

The post Equinix Accelerates Enterprise AI Workloads with Fabric Intelligence appeared first on CX Quest.
