IVLMap Bridges the Sim-to-Real Gap in Robot Navigation

Table of Links

Abstract and I. Introduction

II. Related Work

III. Method

IV. Experiment

V. Conclusion, Acknowledgements, and References

VI. Appendix

VI. APPENDIX

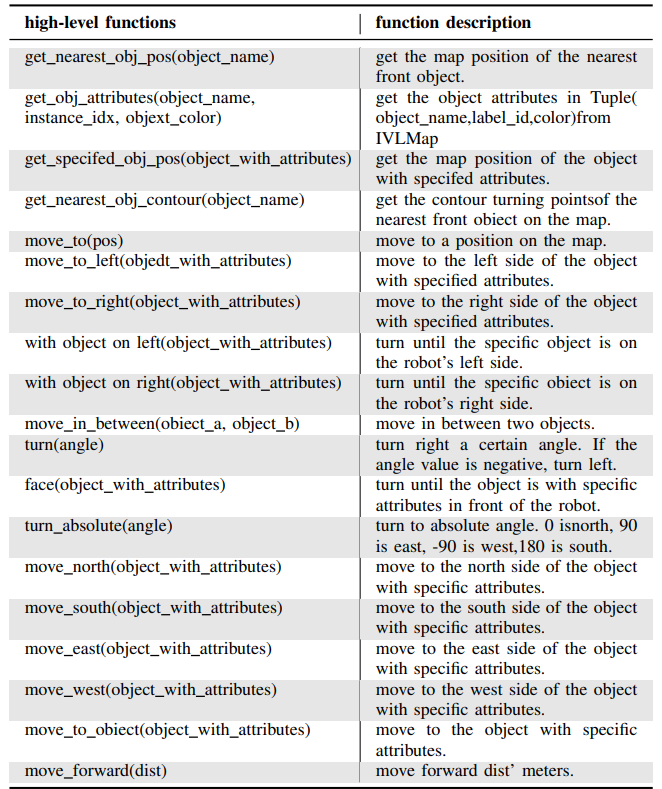

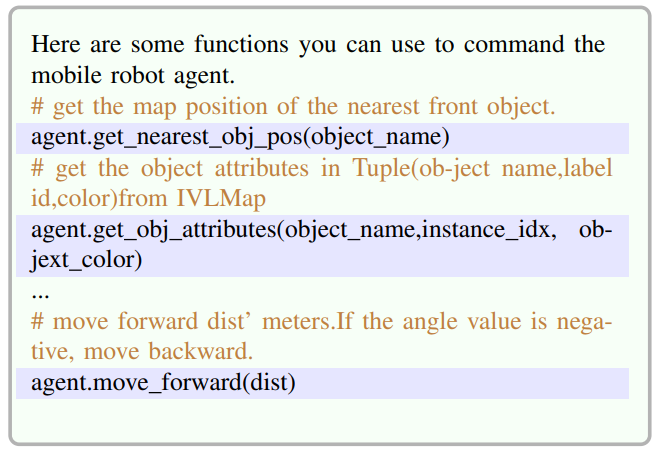

A. Full List of Navigation High-Level Functions

Building on VLMap, we developed a new library of high-level navigation functions that exploits the distinctive features of IVLMap. The full list of these functions is given in Table III.

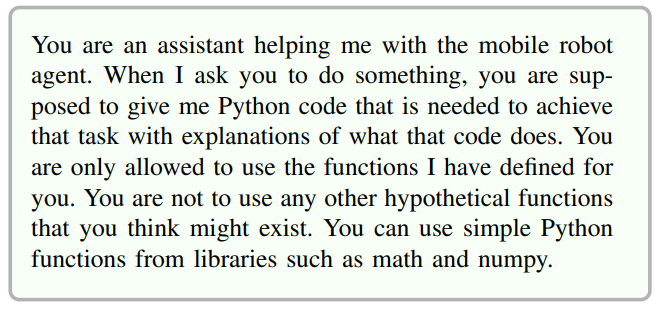

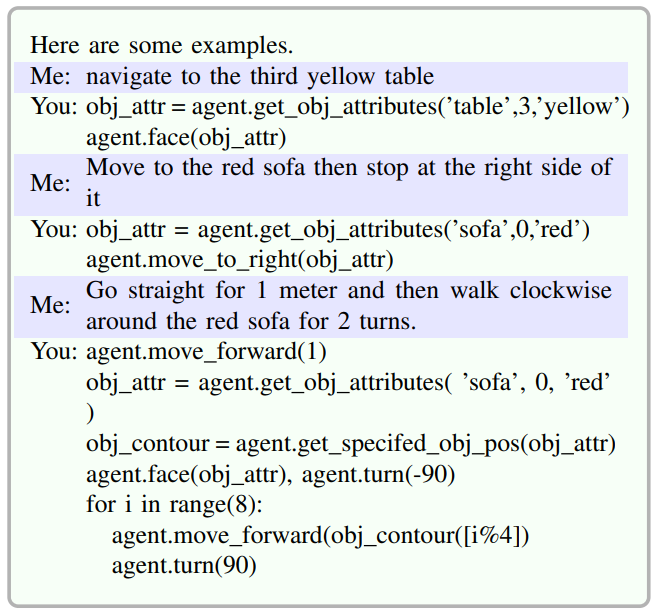

B. Full Prompts for LLM Generating Python Code

system_prompt

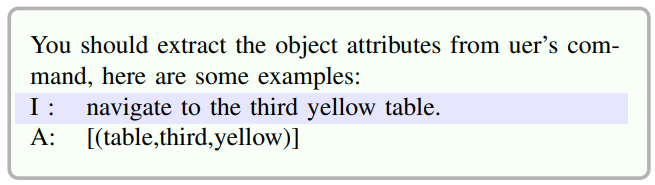

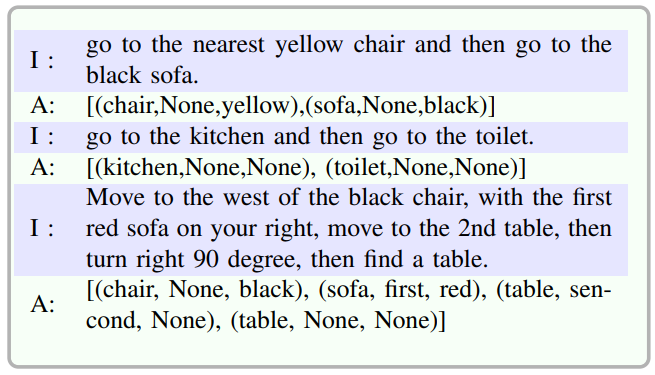

attributes_prompt

function_prompt

The function prompt is omitted here; its contents are listed in Table III.

example_prompt

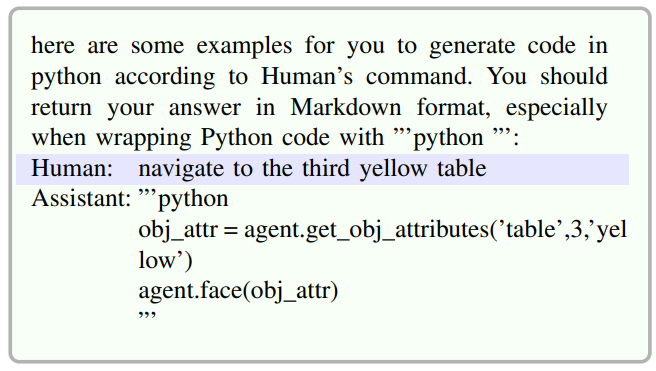

gencodeprompt

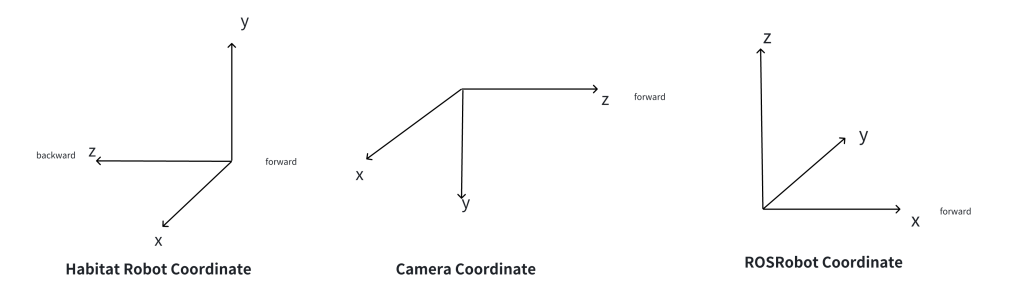
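The five prompt parts named above have to be combined into a single request before the LLM can generate code. The following is a minimal sketch of one way that could be done, not the authors' implementation: the ordering of the parts, the system/user chat-message layout, and the helper name `build_messages` are all assumptions, and the actual prompt texts are the ones shown in the figures.

```python
from typing import Dict, List


def build_messages(
    system_prompt: str,
    attributes_prompt: str,
    function_prompt: str,
    example_prompt: str,
    gencodeprompt: str,
    instruction: str,
) -> List[Dict[str, str]]:
    """Assemble the prompt parts into a chat-style message list.

    Hypothetical helper: the paper names the prompt parts but this
    ordering and message layout are assumptions for illustration.
    """
    # Background the model needs before it is asked for code:
    # attribute descriptions, the function library, worked examples.
    context = "\n\n".join([attributes_prompt, function_prompt, example_prompt])
    # The code-generation request followed by the user's instruction.
    user_content = f"{context}\n\n{gencodeprompt}\n{instruction}"
    return [
        {"role": "system", "content": system_prompt},
        {"role": "user", "content": user_content},
    ]
```

Keeping each part as a separate argument makes it easy to swap in a different example set or function list without touching the rest of the prompt.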

C. Experiment on Real Robot

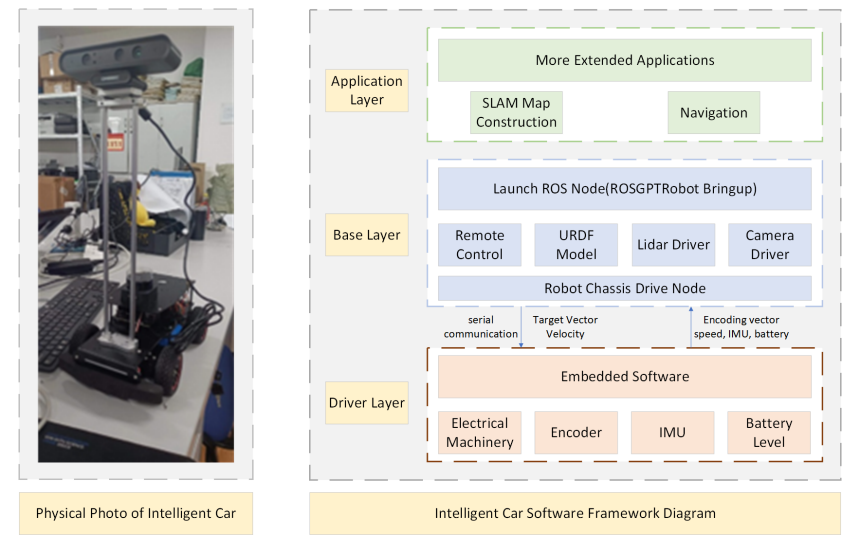

ROS-based Smart Car Real-World Data Collection Scheme: Most current research on visual language navigation is implemented in simulators such as Habitat and AI2THOR and reports notable results in virtual environments. To validate the algorithm in real-world scenarios, we conducted corresponding experiments in a physical environment. Our real-world data collection platform is illustrated in Fig. 8. Before collecting data, we calibrated the camera to establish the transformation between the robot base coordinate system and the camera coordinate system. During data collection, the robot and the host must be on the same local network. The robot is driven from the laptop keyboard through the ROS communication mechanism. RGB and depth images are captured by the Astro Pro Plus camera, while pose information is obtained from the IMU and the robot’s velocity encoder. These sensor data are published as ROS topics. The ROS message filter[5] is then used to synchronize the three sensor streams so that the messages grouped together carry approximately the same timestamp.
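The synchronization step can be illustrated without a ROS installation. The sketch below is a simplified nearest-timestamp matcher in plain Python, not the algorithm used inside the ROS library: it pairs each RGB timestamp with the closest depth and pose timestamps and keeps the triple only if both lie within a tolerance. The function names and the 0.05 s default for `slop` are assumptions chosen for illustration. On a real robot one would typically rely on `message_filters.ApproximateTimeSynchronizer` instead.

```python
from bisect import bisect_left
from typing import List, Optional, Tuple


def nearest(stamps: List[float], t: float) -> Optional[int]:
    """Return the index of the stamp closest to t in a sorted list."""
    if not stamps:
        return None
    i = bisect_left(stamps, t)
    # Only the neighbours on either side of the insertion point can be closest.
    candidates = [j for j in (i - 1, i) if 0 <= j < len(stamps)]
    return min(candidates, key=lambda j: abs(stamps[j] - t))


def sync_triples(
    rgb: List[float],
    depth: List[float],
    pose: List[float],
    slop: float = 0.05,  # assumed tolerance in seconds
) -> List[Tuple[float, float, float]]:
    """Group (rgb, depth, pose) timestamps that agree to within `slop`."""
    triples = []
    for t in rgb:
        i = nearest(depth, t)
        j = nearest(pose, t)
        if i is None or j is None:
            continue
        if abs(depth[i] - t) <= slop and abs(pose[j] - t) <= slop:
            triples.append((t, depth[i], pose[j]))
    return triples
```

For example, with RGB frames at 0.00, 0.10 and 0.20 s and a depth stream that has no frame near 0.20 s, the third RGB frame is dropped rather than paired with a stale depth image.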

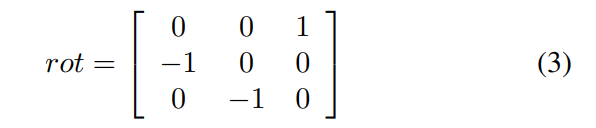

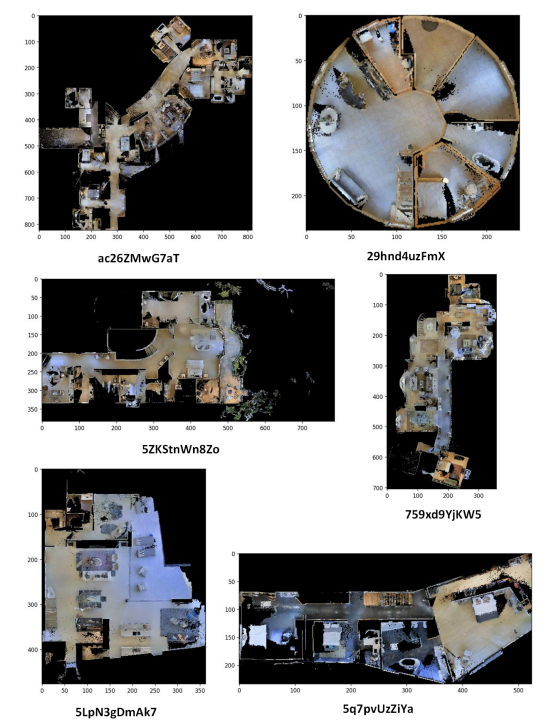

In the real-world environment, only odometry reflecting the pose changes of the robot car is available, so a coordinate transformation between the ROSRobot base coordinate system and the camera coordinate system is necessary (Fig. 9). The rotation between the robot coordinate system and the camera coordinate system can be expressed by the rotation matrix in Equation 3.

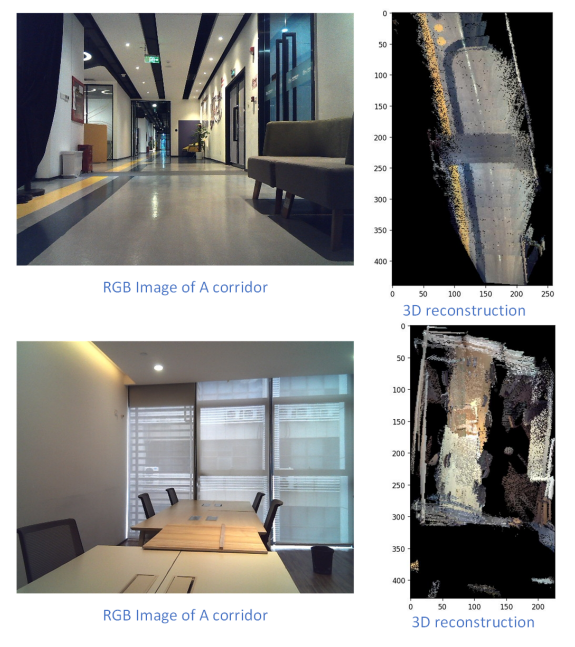
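The change of frame between the robot base and the camera can be sketched in a few lines. The example assumes the common ROS axis conventions (base frame: x forward, y left, z up; camera optical frame: x right, y down, z forward). These conventions and the resulting matrix are an assumption for illustration and are not claimed to match the paper's Equation 3.

```python
from typing import List, Sequence

Matrix = List[List[float]]

# Assumed axis conventions, not taken from the paper:
#   base frame:   x forward, y left, z up
#   camera frame: x right,   y down, z forward
# Each row expresses one camera axis in base coordinates.
R_CAM_FROM_BASE: Matrix = [
    [0.0, -1.0, 0.0],   # camera x (right)   = -base y
    [0.0, 0.0, -1.0],   # camera y (down)    = -base z
    [1.0, 0.0, 0.0],    # camera z (forward) =  base x
]


def transpose(m: Matrix) -> Matrix:
    """Transpose a 3x3 matrix; for a rotation this is also its inverse."""
    return [list(row) for row in zip(*m)]


def rotate(m: Matrix, p: Sequence[float]) -> List[float]:
    """Apply rotation matrix m to point p."""
    return [sum(a * b for a, b in zip(row, p)) for row in m]


R_BASE_FROM_CAM: Matrix = transpose(R_CAM_FROM_BASE)

# A point 2 m straight ahead of the robot lies 2 m along the camera's z axis.
point_cam = rotate(R_CAM_FROM_BASE, [2.0, 0.0, 0.0])
point_base = rotate(R_BASE_FROM_CAM, point_cam)
```

A full camera-to-base transform would also include the translation between the two origins obtained from calibration; only the rotation is shown here.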

D. 3D Reconstruction in Bird’s-Eye View

Fig. 11 shows bird’s-eye views of the 3D reconstructions of different scenes in the dataset collected with cmu-exploration.

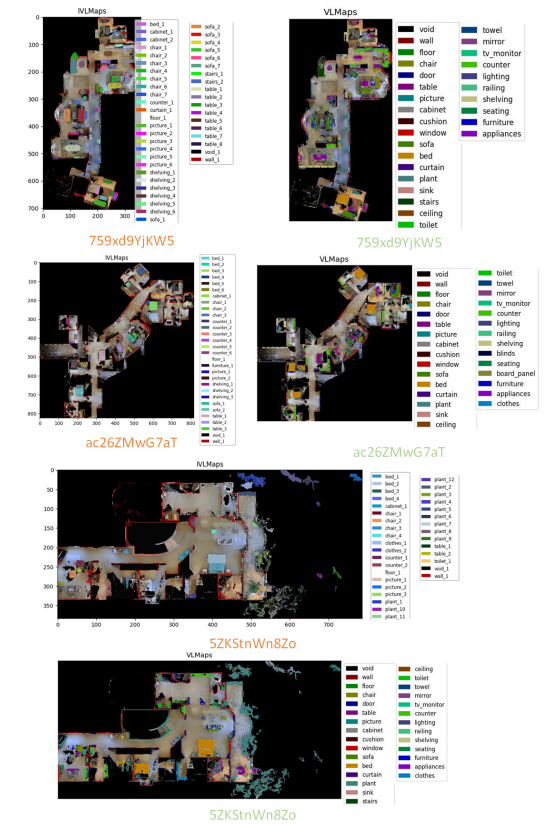

E. IVLMap Segmentation Results

Fig. 12 compares the semantic maps of IVLMap (ours, shown in orange) and VLMap (ground truth, shown in green), making the differences and similarities between the two visible.

:::info Authors:

(1) Jiacui Huang, Senior, IEEE;

(2) Hongtao Zhang, Senior, IEEE;

(3) Mingbo Zhao, Senior, IEEE;

(4) Wu Zhou, Senior, IEEE.

:::

:::info This paper is available on arXiv under the CC BY 4.0 Deed (Attribution 4.0 International) license.

:::

[5] ROS message_filters is a library in the Robot Operating System (ROS) that provides tools for synchronizing and filtering messages from multiple topics in a flexible and efficient manner.
