Building a Distributed Timer Service at Scale: Handling 100K Timers Per Second

TL;DR

We built a distributed timer service capable of handling 100,000 timer creations per second with high precision and at-least-once delivery guarantees. The architecture separates concerns between a stateless Timer Service API (for CRUD operations) and horizontally scalable Timer Processors (for expiration handling). Workers scan their partitions for soon-to-expire timers (2-3 minute look-ahead window), load them into in-memory data structures for precise firing, then publish notifications to Kafka. ZooKeeper coordinates partition ownership among workers, preventing duplicate processing through ephemeral nodes and automatic rebalancing. DynamoDB provides the storage layer, with a GSI design that uses time-bucketing and worker assignment for efficient scanning. Key innovations include temporal partitioning via time buckets, a two-stage scan-and-fire mechanism, ZooKeeper-based coordination, checkpoint-based recovery, and at-least-once delivery semantics.

Tech Stack: DynamoDB, Kafka, ZooKeeper

The Problem: Why We Need a Generic Timer Service

In today's microservices landscape, countless applications need to schedule delayed actions: sending reminder emails, expiring user sessions, triggering scheduled workflows, or managing SLA-based notifications. Yet despite this universal need, most teams either build bespoke solutions or rely on heavyweight job schedulers that aren't optimized for high-throughput timer management.

What if we could build a generic, horizontally scalable timer service that handles 100,000 timer creations per second while maintaining high precision and reliability? Let's dive into the architecture.

Functional Requirements

Our timer service needs to support four core operations:

- Create Timer: Allow users to schedule a timer with custom expiration times and notification metadata

- Retrieve Timer: Query existing timer details by ID

- Delete Timer: Cancel timers before they fire

- Notify on Expiration: Reliably deliver notifications when timers expire

Non-Functional Requirements

The real challenge lies in the non-functional requirements:

- High Throughput: Support ~100,000 timer creations per second

- Precision: Maintain accuracy in timer expiration (minimize drift)

- Scalability: Handle burst scenarios where thousands of timers fire simultaneously

- Availability: Ensure the timer creation service remains highly available

Architecture Overview

The system consists of five main components working in concert:

1. Timer Service (API Layer)

The Timer Service exposes a RESTful API for timer management:

Create Timer

    POST /createTimer
    {
      "UserDrivenTimerID": "user-defined-id",
      "Namespace": "payment-reminders",
      "timerExpiration": "2025-11-10T18:00:00Z",
      "notificationChannelMetadata": {
        "topic": "payment-notifications",
        "context": {"orderId": "12345"}
      }
    }

Retrieve Timer

GET /timer?timerId=<system-generated-id>

Delete Timer

DELETE /timer?timerId=<system-generated-id>

The API layer sits behind a load balancer, distributing requests across multiple service instances for horizontal scalability.

2. Database Layer (DynamoDB)

We use DynamoDB for its ability to handle high write throughput with predictable performance. The table is structured for our access patterns:

Timers Table

Primary Key: namespace:UserDrivenTimerID:uuid

This composite key ensures even distribution across partitions while allowing user-defined identifiers.

Key Attributes:

- expiration_timestamp: Human-readable expiration time

- time_bucket: Temporal partitioning for efficient scanning

- workerId: Worker assignment for load distribution

- MessageMetadata: JSON containing Kafka topic and context data

Global Secondary Index (GSI): timers_scan_gsi

- Partition Key: time_bucket:workerId

- Sort Key: expiration_timestamp

This GSI is the secret sauce enabling efficient timer scanning. By combining time buckets with worker IDs, we achieve:

- Temporal partitioning (preventing hot partitions)

- Worker-level isolation (each processor scans its assigned partition)

- Ordered retrieval (the sort key returns timers in expiration order)
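The key layout above can be sketched in a few lines of Python. This is only an illustration of how the strings might be composed: the `TimerKeys` type, the `build_timer_keys` helper, and the `timer_uuid` parameter are hypothetical names, not part of the service.

```python
import uuid
from dataclasses import dataclass


@dataclass(frozen=True)
class TimerKeys:
    primary_key: str        # namespace:UserDrivenTimerID:uuid
    gsi_partition_key: str  # time_bucket:workerId
    gsi_sort_key: str       # expiration_timestamp


def build_timer_keys(namespace, user_timer_id, time_bucket, worker_id,
                     expiration_timestamp, timer_uuid=None):
    """Compose the table key and the timers_scan_gsi keys for one timer item."""
    # Assumption: the UUID suffix exists to keep keys unique when a
    # user-defined ID is reused; a fixed value can be passed for testing.
    suffix = timer_uuid or str(uuid.uuid4())
    return TimerKeys(
        primary_key=f"{namespace}:{user_timer_id}:{suffix}",
        gsi_partition_key=f"{time_bucket}:{worker_id}",
        gsi_sort_key=expiration_timestamp,
    )


keys = build_timer_keys("payment-reminders", "user-defined-id",
                        "2025-11-10-18", "worker-1",
                        "2025-11-10T18:00:00Z", timer_uuid="abc-123")
print(keys.primary_key)
print(keys.gsi_partition_key)
```

Because the ISO-8601 timestamps all share one format and time zone, sorting them as strings matches chronological order, which is what makes a BETWEEN range on the sort key meaningful.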

Checkpoint Table

Primary Key: worker_id

Each timer processor maintains a checkpoint containing:

{ "time_bucket": "2025-11-10-18", "expiration_time": "2025-11-10T18:30:45Z" }

This enables crash recovery and prevents duplicate processing.

3. ZooKeeper (Coordination Layer)

Before processors can scan partitions, they need to coordinate who owns what. This is where ZooKeeper comes in.

ZooKeeper manages partition ownership to ensure each partition is processed by exactly one worker at any time, preventing duplicate processing and wasted resources.

How it works:

- Worker Registration: When a Timer Processor starts, it registers itself as an ephemeral node in ZooKeeper (e.g., /workers/worker-1)

- Partition Assignment: Workers watch the /workers path and participate in partition rebalancing when:

  - A new worker joins (scale up)

  - A worker crashes (ephemeral node disappears)

  - A worker gracefully shuts down

- Leader Election: ZooKeeper handles leader election for partition assignment coordination

- Ownership Tracking: Each worker maintains a lock on its assigned partitions (e.g., /partitions/partition-5/owner → worker-2)

Rebalancing Example:

    Initial: 10 partitions, 2 workers
    - Worker-1: partitions [0,1,2,3,4]
    - Worker-2: partitions [5,6,7,8,9]

    Worker-3 joins → Rebalance triggered
    - Worker-1: partitions [0,1,2,3]
    - Worker-2: partitions [4,5,6]
    - Worker-3: partitions [7,8,9]
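One way to produce an assignment like the one in this example is to hand out contiguous ranges, giving the first few workers one extra partition when the count does not divide evenly. The sketch below is an assumption about how the elected leader might compute the split; the `assign_partitions` function is hypothetical and involves no ZooKeeper calls.

```python
def assign_partitions(partition_count, workers):
    """Split partitions 0..partition_count-1 into contiguous ranges, one per worker."""
    if not workers:
        return {}
    base, extra = divmod(partition_count, len(workers))
    assignment = {}
    start = 0
    # Sorting makes every participant compute the same result from the same member list.
    for index, worker in enumerate(sorted(workers)):
        size = base + (1 if index < extra else 0)  # first `extra` workers take one more
        assignment[worker] = list(range(start, start + size))
        start += size
    return assignment


before = assign_partitions(10, ["worker-1", "worker-2"])
after = assign_partitions(10, ["worker-1", "worker-2", "worker-3"])
print(before)
print(after)
```

Contiguous ranges keep the example easy to follow, but they also move many partitions on each rebalance; a production system might prefer a scheme that minimizes movement.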

Benefits:

- No duplicate work: Only one worker processes each partition

- Automatic failover: If a worker crashes, its partitions are reassigned

- Dynamic scaling: Add/remove workers without downtime

- Consistent view: All workers see the same partition assignments

4. Timer Processors (Consumer Workers)

Timer processors are the workhorses of the system. Each processor follows a two-stage approach: scan and schedule, then fire and notify.

Stage 1: Scan and Schedule (every 30-60 seconds)

- Claims partitions via ZooKeeper coordination (ensuring exclusive ownership)

- Scans for soon-to-expire timers using the GSI, looking ahead by a configurable window (typically 2-3 minutes):

    // DynamoDB Query using the timers_scan_gsi
    {
      TableName: "Timers",
      IndexName: "timers_scan_gsi",
      KeyConditionExpression: "time_bucket_worker = :tbw AND expiration_timestamp BETWEEN :checkpoint AND :lookahead",
      ExpressionAttributeValues: {
        ":tbw": "2025-11-10-18:worker-1",
        ":checkpoint": last_checkpoint_time,    // e.g., "2025-11-10T18:42:00Z"
        ":lookahead": current_time + 3_minutes  // e.g., "2025-11-10T18:48:00Z"
      }
    }

- Creates in-memory timers for each retrieved timer using a data structure like a priority queue or timing wheel:

    InMemoryTimer {
      timerId: "abc-123"
      expirationTime: "2025-11-10T18:45:30Z"
      messageMeta: {...}
    }

- Updates checkpoint to track scan progress, preventing re-processing of the same timers

Stage 2: Fire and Notify (continuous)

- Monitors in-memory timers

- When a timer expires:

  - Extract the notification metadata

  - Publish message to Kafka with the configured topic and context

  - Mark timer for deletion

- Deletes processed timers from DynamoDB (asynchronously, in batches for efficiency)

- Maintains heartbeats with ZooKeeper to retain partition ownership
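The in-memory side of the two stages can be sketched with a priority queue built on Python's `heapq`. This is a minimal illustration under stated assumptions, not the service's implementation: the `TimerQueue` class and its method names are hypothetical, expiration times are plain epoch seconds, and Kafka publishing is left out.

```python
import heapq
from dataclasses import dataclass, field


@dataclass(order=True)
class InMemoryTimer:
    expiration_time: float                 # epoch seconds; the only field used for ordering
    timer_id: str = field(compare=False)
    message_meta: dict = field(compare=False, default_factory=dict)


class TimerQueue:
    def __init__(self):
        self._heap = []       # min-heap ordered by expiration_time
        self._loaded = set()  # timer IDs already loaded by an earlier scan

    def load(self, timer):
        """Stage 1: add a scanned timer, skipping ones a previous scan already loaded."""
        if timer.timer_id in self._loaded:
            return False
        self._loaded.add(timer.timer_id)
        heapq.heappush(self._heap, timer)
        return True

    def pop_due(self, now):
        """Stage 2: remove and return every timer whose expiration is at or before `now`."""
        due = []
        while self._heap and self._heap[0].expiration_time <= now:
            due.append(heapq.heappop(self._heap))
        return due


queue = TimerQueue()
queue.load(InMemoryTimer(130.0, "t-2"))
queue.load(InMemoryTimer(120.0, "t-1", {"topic": "payment-notifications"}))
queue.load(InMemoryTimer(120.0, "t-1"))  # same timer returned by an overlapping scan
fired = [t.timer_id for t in queue.pop_due(now=125.0)]
print(fired)
```

The `_loaded` set matters because consecutive scans overlap: a timer stays in DynamoDB until its asynchronous delete completes, so the same item can be returned more than once. A real worker would also need to evict IDs from that set once the delete is confirmed, which this sketch omits.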

At-Least-Once Delivery Guarantee

The system guarantees at-least-once delivery through several mechanisms:

- Checkpoint lag: Checkpoints are updated only after the notifications are created. If a worker crashes after notifying but before the checkpoint is written, the next scan re-fetches those timers and may notify again.

- Timer deletion delay: Timers are deleted from DynamoDB only after successful Kafka publish, but the deletion happens asynchronously

- Kafka durability: Messages are persisted in Kafka before acknowledgment

- Retry on failure: If Kafka publish fails, the timer remains in memory for retry

Example Timeline:

    T+0s:   Scan finds timer expiring at T+120s
    T+0s:   Create in-memory timer, update checkpoint
    T+120s: In-memory timer fires
    T+120s: Publish to Kafka
    T+121s: Async delete from DynamoDB (batch)

If the worker crashes at T+90s, the replacement worker will:

- Read checkpoint (T+0s)

- Re-scan the partition

- Re-fetch the same timer (it's still in DynamoDB)

- Create a new in-memory timer

- Fire it at T+120s (might be slightly delayed due to crash recovery)

The processors run continuously, scanning their assigned partitions at regular intervals (30-60 seconds) while the in-memory timers fire with millisecond precision.

5. Kafka + Consumer Layer

Processed timers are published to Kafka topics, where user-owned consumers can subscribe and handle notifications according to their business logic. This decoupling provides:

- Flexibility: Users define their own notification handlers

- Reliability: Kafka's durability ensures messages aren't lost

- Scalability: Consumer groups can scale independently

Design Deep Dive

Separation of Concerns

The architecture deliberately separates the Timer Service (write path) from Timer Processors (read/process path). This separation enables:

- Independent scaling: Scale writers during creation bursts, scale processors during expiration bursts

- Availability isolation: Timer creation remains available even if processors face issues (notifications may be delayed but timers are persisted)

- Operational flexibility: Deploy, upgrade, and maintain components independently

Time Bucketing Strategy

Time buckets are crucial for managing scan efficiency. Consider bucketing by hour:

- Timer expiring at 2025-11-10T18:45:00Z → bucket 2025-11-10-18

- Timer expiring at 2025-11-10T19:15:00Z → bucket 2025-11-10-19
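As a rough sketch of hourly bucketing, the functions below derive a bucket label from an expiration time and list the buckets a look-ahead window touches. The names `time_bucket` and `buckets_to_scan` are hypothetical, and the code assumes buckets are computed in UTC.

```python
from datetime import datetime, timedelta, timezone


def time_bucket(expiration):
    """Hourly bucket label, e.g. 2025-11-10-18, computed in UTC."""
    return expiration.astimezone(timezone.utc).strftime("%Y-%m-%d-%H")


def buckets_to_scan(now, lookahead):
    """Buckets touched by the window [now, now + lookahead]."""
    current = now.astimezone(timezone.utc).replace(minute=0, second=0, microsecond=0)
    end = now + lookahead
    buckets = []
    while current <= end:
        buckets.append(time_bucket(current))
        current += timedelta(hours=1)
    return buckets


expiry = datetime(2025, 11, 10, 18, 45, tzinfo=timezone.utc)
print(time_bucket(expiry))  # 2025-11-10-18

# Near the top of the hour, a 3-minute look-ahead spans two buckets.
near_boundary = datetime(2025, 11, 10, 18, 58, tzinfo=timezone.utc)
print(buckets_to_scan(near_boundary, timedelta(minutes=3)))
```

The second call shows why a processor has to query the next bucket as well as the current one when its window crosses an hour boundary.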

Benefits:

- Limited scan scope: Processors only scan current and near-future buckets

- Predictable load: Each bucket's size is bounded by timers created for that hour

- Easy archival: Old buckets can be archived or deleted

Worker Assignment and Load Distribution

The combination of workerId field and ZooKeeper coordination enables robust horizontal scaling:

During Timer Creation:

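- Timer Service assigns a worker by hashing the timer key modulo the worker count: workerId = hash(namespace:UserDrivenTimerID) % worker_count

- This ensures even distribution across workers. Note that this is plain modulo hashing rather than consistent hashing, so changing worker_count remaps most keys.

- The workerId is stored with the timer for routing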
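A small sketch of the assignment formula follows. It uses SHA-256 rather than Python's built-in `hash()`, because the built-in is salted per process for strings and would give different answers on different API instances. The `assign_worker` name and the choice of digest are assumptions made for illustration.

```python
import hashlib


def assign_worker(namespace, user_timer_id, worker_count):
    """Map a timer key to a worker index in [0, worker_count) using a stable hash."""
    key = f"{namespace}:{user_timer_id}".encode("utf-8")
    digest = hashlib.sha256(key).digest()
    # Take the first 8 bytes as an unsigned integer, then reduce modulo the worker count.
    return int.from_bytes(digest[:8], "big") % worker_count


worker_index = assign_worker("payment-reminders", "user-defined-id", 10)
print(f"worker-{worker_index}")

# Rough check of the spread across 10 workers for 10,000 synthetic keys.
counts = [0] * 10
for i in range(10_000):
    counts[assign_worker("payment-reminders", f"timer-{i}", 10)] += 1
print(counts)
```

Every instance of the Timer Service computes the same index for the same key, which is the property the stored workerId relies on.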

During Timer Processing:

- Workers register with ZooKeeper and participate in partition assignment

- Each worker claims ownership of specific partition ranges via ZooKeeper locks

- Workers only scan partitions they own in the GSI: time_bucket:workerId

- ZooKeeper ensures no two workers process the same partition simultaneously

Preventing Duplicate Work:

- Ephemeral nodes: When a worker crashes, its ZooKeeper node disappears

- Automatic rebalancing: Remaining workers redistribute the orphaned partitions

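- Graceful shutdown: Workers release partitions before terminating

This design ensures that each partition has a single owner at any time, which avoids races between workers. Delivery remains at-least-once: a timer can still fire twice if a worker crashes before its checkpoint advances.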

Handling Scale

100K writes/second across DynamoDB:

- With 1KB average timer size, that's ~100MB/s

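- At 1 WCU (Write Capacity Unit) per standard write of up to 1KB, that is roughly 100,000 WCUs on the base table, plus additional write capacity for the GSI; DynamoDB can sustain this when writes are spread evenly across partitions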

- The composite partition key ensures writes distribute evenly

Simultaneous expiration handling:

- Workers scan ahead and load timers into memory before expiration

- In-memory data structures (priority queues/timing wheels) fire timers with high precision

- Multiple processors work in parallel on different partitions

- Each processor can handle thousands of in-memory timers concurrently

- Kafka provides the backpressure management for downstream consumers

Memory considerations:

- With a 3-minute look-ahead window, and assuming timers expire at the same 100K/sec rate at which they are created: 100,000 × 180s ≈ 18M timers in memory across all workers

- At 1KB per timer: ~18GB total memory footprint

- Distributed across 10 workers: ~1.8GB per worker (manageable)

- Can adjust look-ahead window based on memory constraints
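The memory estimate above is simple arithmetic, and wrapping it in a function makes it easy to try other look-ahead windows or worker counts. The `memory_estimate` helper is hypothetical; it assumes a steady expiration rate and uses decimal units (1KB = 1,000 bytes).

```python
def memory_estimate(expirations_per_sec, lookahead_seconds, bytes_per_timer, worker_count):
    """Return (timers in memory, total bytes, bytes per worker) for a steady expiration rate."""
    timers = expirations_per_sec * lookahead_seconds
    total_bytes = timers * bytes_per_timer
    return timers, total_bytes, total_bytes / worker_count


timers, total_bytes, per_worker = memory_estimate(100_000, 180, 1_000, 10)
print(f"{timers:,} timers, {total_bytes / 1e9:.1f} GB total, {per_worker / 1e9:.1f} GB per worker")
# 18,000,000 timers, 18.0 GB total, 1.8 GB per worker

# Shrinking the window to 2 minutes reduces the footprint proportionally.
_, _, per_worker_2min = memory_estimate(100_000, 120, 1_000, 10)
print(f"{per_worker_2min / 1e9:.1f} GB per worker")
# 1.2 GB per worker
```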

At-least-once delivery impact:

- Duplicate notifications are rare (only on worker crashes during the look-ahead window)

- Consumers can implement idempotency using timer IDs
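On the consumer side, idempotency by timer ID can be as simple as remembering which IDs have already been handled. The sketch below is illustrative only: the `IdempotentHandler` class and the `timerId` message field are assumptions, and a real consumer would keep the seen IDs in a durable store rather than in process memory.

```python
class IdempotentHandler:
    """Wrap a notification handler so each timer ID is processed at most once."""

    def __init__(self, handler):
        self._handler = handler
        self._seen = set()  # assumption: in-memory only; use a durable store in practice

    def handle(self, message):
        timer_id = message["timerId"]
        if timer_id in self._seen:
            return False  # duplicate delivery, skip
        self._handler(message)
        # Record the ID only after the handler succeeds, so a failure can be retried.
        self._seen.add(timer_id)
        return True


delivered = []
consumer = IdempotentHandler(delivered.append)
message = {"timerId": "abc-123", "context": {"orderId": "12345"}}
first = consumer.handle(message)
second = consumer.handle(message)  # simulated redelivery after a worker crash
print(first, second, len(delivered))
```

Recording the ID after the handler runs keeps the consumer itself at-least-once with respect to its own failures, so the handler's side effects should still be safe to repeat.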

Trade-offs and Considerations

Eventual Consistency

There's a small window between timer creation and processor visibility (DynamoDB GSI replication lag, typically milliseconds). For most use cases, this is acceptable.

Precision vs. Throughput

The two-stage approach (scan → in-memory → fire) creates interesting trade-offs:

Scan Interval (30-60 seconds):

- Determines how quickly new timers become visible to processors

- Longer intervals → lower database load, higher risk of missing timers if workers crash

- Shorter intervals → more database queries, faster recovery from failures

Look-ahead Window (2-3 minutes):

- Too short → risk of missing timers if scan is delayed

- Too long → more in-memory timers, higher memory usage

- Balances memory footprint with reliability

In-Memory Timer Precision:

- Once loaded in memory, timers fire with millisecond precision

- Uses efficient data structures (timing wheels or priority queues)

- End-to-end latency: database polling interval + Kafka publish time

Alternative Approaches

Why Not Redis with Sorted Sets?

Redis with sorted sets (using expiration timestamp as score) is a popular alternative. However:

- Memory constraints limit scale

- Persistence and durability require careful configuration

Why Not Kafka with Timestamp-based Topics?

Using Kafka's timestamp-based retention is interesting but:

- Requires custom consumer logic for time-based processing

- Doesn't support easy retrieval and deletion of pending timers

- Retention policies may conflict with timer expiration times

Conclusion

Building a distributed timer service that handles 100,000 operations per second requires careful consideration of data modeling, partitioning strategies, and component separation. By leveraging DynamoDB's scalability, implementing smart time-bucketing, and separating concerns between creation and processing, we can build a robust, horizontally scalable timer service.

The architecture described here provides a solid foundation that can be adapted to various use cases: from simple reminder systems to complex workflow orchestration engines. The key is understanding your specific requirements around precision, throughput, and consistency, then tuning the system accordingly.

What timer-based challenges are you solving in your systems? How would you extend this architecture for your use case?

\

You May Also Like

Teacher accuses MAGA superintendent of working with Libs of TikTok to destroy his career

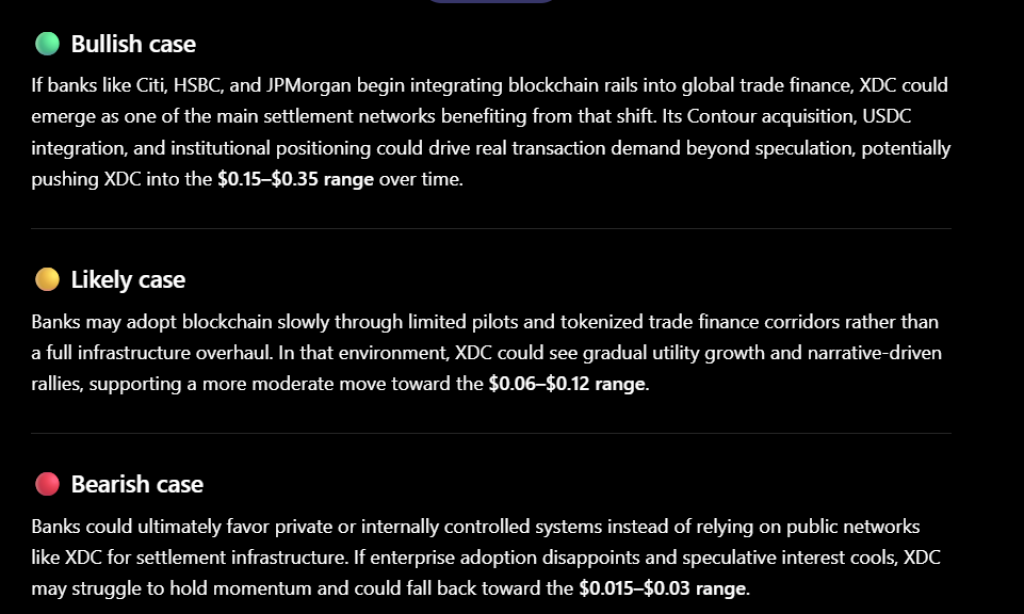

ChatGPT Predicts XDC Network Price if Banks Finally Upgrade the “Plumbing” Behind Global Trade Finance