Leonardo quantum strategy pushes energy-efficient AI and sensing

The Leonardo quantum roadmap brings together advanced computing, AI and photonics to cut energy use while unlocking new defense and space applications.

How is Leonardo using quantum technologies to reshape its digital strategy?

Carlo Rovelli recalls in Helgoland how Werner Heisenberg, in the summer of 1925 on the island of Helgoland, overturned Newton’s orderly universe by founding quantum mechanics.

A century later, that revolution still shapes today’s race for technological power. However, its practical applications remain complex and highly strategic.

The realm of the quantum continues to hide parts of reality behind what Rovelli calls the veil of the Maya. Theoretical physics now describes many subatomic phenomena that underpin life and matter. Yet transforming these insights into robust technologies is still a global challenge, increasingly tied to geopolitical competition.

The contest for so-called quantum supremacy has already started and has clear political and economic implications. Moreover, the competition is no longer limited to the US, China and Russia. Also Italy is on the field, leveraging its industrial and scientific ecosystem to build future capabilities.

Where does quantum fit in Leonardo’ Industrial Plan?

Quantum technologies sit at the core of Leonardo’s digital trajectory, as outlined in the 2024 Industrial Plan alongside AI, supercomputing, cloud, big data and cybersecurity. According to Massimiliano Dispenza, Head of Quantum Technologies, Optronics & Advanced Materials Labs, this step consolidates the company’s role as a high technology player.

Dispenza stresses that this evolution is not a leap into the void but part of a long-term innovation path. Leonardo aims to explore and integrate the most advanced frontiers of research. That said, the group is already experimenting with the progressive integration of quantum capabilities into defense, security and space systems.

For Dispenza, holding ground in quantum today translates into technological sovereignty tomorrow. It also secures competitive advantage and future leadership. Moreover, he frames this continuity of vision as the real strength of Leonardo’s longE28093range strategy, in which leonardo quantum initiatives are a central pillar.

How is Leonardo approaching quantum computing compared to traditional supercomputing?

The first major game changer is quantum computing, expected to outperform classical supercomputing on specific problems. It is the focus of Leonardo’s Center of Excellence on Advanced Cognitive Solutions. Here, a dedicated team works on technologies that could radically transform data processing.

Daniele Dragoni, Head of Quantum Computing Solutions & Head of Sales Engineering Hypercomputing, explains that the group has spent the last five years developing potentially disruptive solutions. On the wider market, various players are advancing quickly, but a true breakthrough has yet to materialize. However, Leonardo has opted for a pragmatic, use-case driven path.

The company leaves the development of quantum hardware platforms to specialized vendors and concentrates on algorithms and practical applications. This choice calls for a hybrid strategy that also leverages traditional high performance computing. In this domain, Leonardo already holds a leading position thanks to the DaVinci supercomputer installed in Genoa, as confirmed by official company data on davinci performance.

Can quantum help build more energy-efficient AI models?

The Center of Excellence is also targeting energy-efficient AI models that match the effectiveness of current systems while consuming less power. The goal is to reach similar performance using resources closer to those of traditional PCs. Moreover, researchers are pushing into advanced optimization tasks.

In particular, the team focuses on combinatorial optimization, which involves making decisions in critical situations in the shortest possible time with an error margin close to zero. Such capabilities are essential in defense, logistics and real-time mission planning. That said, the same tools could support complex financial or industrial problems.

This work connects quantum algorithms, classical supercomputing and AI under a unified framework. While full scale quantum machines are not yet widespread, hybrid approaches already allow experimentation. External studies on Leonardo-s high performance infrastructure highlight how this integration accelerates research cycles.

What are Leonardo’s projects beyond quantum hyper-computing?

Beyond quantum hyper computing, Leonardo is developing a second family of applications in its Quantum Technologies Labs in Rome, part of the broader Leonardo Innovation Labs network. Around twenty researchers work with Dispenza on photonics and sensing. However, their goal is not simply incremental improvement, but entirely new capabilities.

These teams are designing systems that can see objects hidden from direct view, behind obstacles or through smoke and fog. They are also studying quantum based navigation solutions that could offer precise positioning without satellites, a key capability in contested environments. This work could reshape both civilian and military operations.

To achieve this, researchers observe single photons and track their behavior in space and time. By analysing their trajectories, they can reconstruct the surrounding environment without direct line sight.

Moreover, such approaches may enable the safe inspection of dangerous areas or early detection of obstacles for autonomous vehicles, in line with broader advances in quantum sensing.

How does photon-based sensing enable vision through fog and smoke?

Dispenza notes that the same principles allow systems to through fog using quantum correlation. In these setups, two or more photons are linked so that measuring the first instantaneously influences the properties of the other, regardless of distance. This is one of the most striking quantum phenomena.

Such correlation underpins advanced photon based sensing architectures that could outperform classical lidar in low visibility conditions. Moreover, the ability to work with ultra weak light returns may prove decisive for covert surveillance and resilient mobility.

Within the broader leonardo quantum roadmap, these technologies complement work on computing and AI.

In summary, Leonardo is weaving together quantum computing, photon-based sensing and AI into a coherent strategy that spans the 2024 horizon and beyond.

If successful, this integrated approach could deliver more efficient computation, new navigation methods without satellites and enhanced environmental awareness across defense, space and civil sectors.

You May Also Like

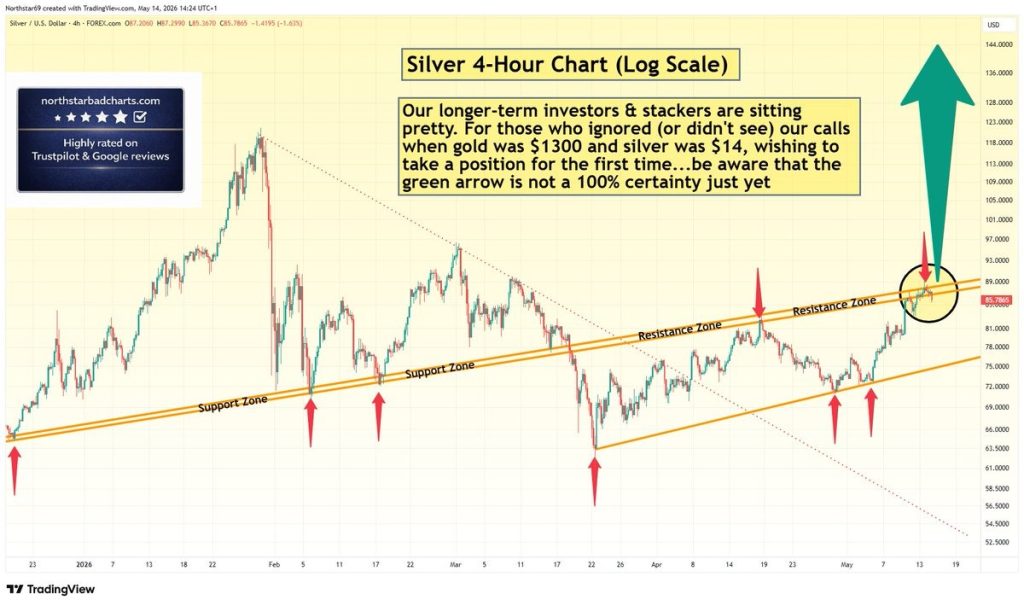

Silver Price Warning: Green Arrow Setup Is Not Confirmed – Wait for Clear Signal

Facebook spotlights African cinema in 6th ‘Made by Africa, loved by the world’ campaign