👨🏿‍🚀 TechCabal Daily – Mono joins the Wave

Happy New Year.

The year already feels like decades are happening in a few days. Fun fact: 2026 is also the start of the second quarter of the 21st century; historically, some of the most important technology and infrastructure advances have happened in this quarter. The 20th century gave us mass electrification. AI in this quarter? Fingers crossed.

If you’re dragging yourself back to work this week, you’re not alone. Judging by the memes flying around WhatsApp, the return-from-holiday struggle has been very real and honestly hilarious.

Either way, welcome back. We’re excited to be back and can’t wait to engage more with you this year. Write to us anytime with feedback, thoughts, or ideas for TC Daily, and look out for our newsletter in your inbox by 7 AM WAT. Quick shout-out to Martha AI, a startup building customer support that senses emotion and responds with empathy. (This is not a sponsored shout-out; Martha AI seems like an AI wrapper that might pass the customer-support automation vibe check that startups keep failing.)

Let’s get started.

—Emmanuel

- Flutterwave acquires Mono

- CNN and 11 other channels will remain on DStv

- iOCO’s share buyback programme

- OADC to acquire data centre operator

- World Wide Web 3

- Job Openings

M&A

Flutterwave acquires Mono in all-stock deal

Image: Justice Flutterwave & Mono

On Monday, Africa’s tech ecosystem woke up to major news: Africa’s largest payments startup, Flutterwave, has bought Mono, a Nigerian open-banking startup, in an all-stock deal reportedly worth between $25 million and $40 million. Mono will retain its CEO and operate independently.

Between the lines: Mono’s open-finance rails give Flutterwave deeper visibility into the data behind the payments it processes, including customer accounts, cash flows, and financial behaviour. That is strategic. Flutterwave can evolve beyond a payment processor into a financial institution capable of offering credit-related services for merchants, lenders, and enterprises, while also strengthening its core payments stack through account-to-account transfers.

The deal is reminiscent of when Mastercard acquired Finicity for $825 million to integrate Finicity’s open‑banking APIs and real‑time financial data access into its own open banking platform. After the acquisition, Mastercard now supports lending, risk scoring, identity verification, and bank payments.

The all-stock structure also tells its own story. For Flutterwave, conserving cash while using equity to absorb a complementary platform reduces balance-sheet strain and aligns long-term incentives (read: its search for profitability).

Some of the online chatter has fixated on the deal valuation, noting that even the upper end of the reported price represents little more than a 2x multiple on Mono’s $15 million Series A raised in 2021. While a modest 2x return falls short of venture-scale outcomes, early backers have an opportunity to get higher returns when Flutterwave lists on a stock exchange.

Mono CEO Abdulhamid Hassan told TechCrunch the business was stable and that this was not a distress sale, but rather the outcome of a strong working relationship between the two startups. The deal allows Hassan, a product guy to the core, to focus on building without the distractions of solo fundraising, while Flutterwave gains a specialised lead to scale its infrastructure as its CEO, Olugbenga “GB” Agboola, continues to navigate the global market.

Importantly, the acquisition reinforces that growth-stage startups are becoming comfortable bringing bigger, complementary players in-house rather than operating in silos, as they double down on their strengths. We saw it last year with Chowdeck and Mira, albeit at a much smaller scale, and this new deal carries on that trend.

Powering African Businesses

Your 2026 demands disciplined financial operations. Fincra powers the payments infrastructure businesses rely on to collect, pay, and settle across local and major African currencies with confidence. Get started.

Streaming

CNN, Cartoon Network, others to stay on DStv after new Canal+ deal

Image Source: MultiChoice

While everybody was looking forward to the end-of-year break, one uncertainty hung over pay-TV, particularly for DStv subscribers in Africa. In December, about 12 channels, including CNN, the global news channel, were reportedly on their way out of DStv’s catalogue by January 1, 2026, after contract negotiations between MultiChoice, the owner of DStv and GOtv, and Warner Bros. Discovery stalled.

However, an eleventh-hour deal changed that.

What happened? On December 31, 2025, Canal+, the French media giant and MultiChoice’s new owner, struck a new deal with CNN owner Warner Bros. Discovery, the global media conglomerate currently the subject of an acquisition tussle, to keep the twelve channels, including CNN and Cartoon Network, on DStv.

The last-minute turnaround: The new deal now gives Canal+, and by extension MultiChoice, a multi-year, multi-territory agreement to stream content on those channels across all markets where the French and South African pay-TV giants operate. The deal will allow MultiChoice to continue streaming HBO Max content—also owned by Warner Bros. Discovery—and expand its distribution. Showmax, the nimble streaming platform owned by MultiChoice, already streams HBO Max content, including popular titles like Game of Thrones.

With the renewed deal and ‘wider distribution,’ Showmax is well-positioned to deepen its HBO Max catalogue over time, and other MultiChoice-owned platforms, like DStv, could see more Warner Bros.–branded content flow through their bundles.

The agreement also brings short-term clarity amid uncertainty at Warner Bros. Discovery, which has been considering a structural reorganisation that could see its global networks business, including CNN, placed under a proposed Discovery Global spin-off. While no such move has been finalised, the new deal ensures continuity for DStv subscribers regardless of how Warner Bros. Discovery ultimately restructures its business.

Companies

South Africa’s iOCO ramps up share repurchase

Image Source: iOCO

iOCO, a South African public company that provides software platforms for telecommunications and digital payments, has completed its share repurchase programme, buying back a further 2.34 million ordinary shares between November 29 and December 31, 2025. The shares were acquired on the open market at prices of up to R4 ($0.24), for a total outlay of R9.4 million ($574,000).

What is this ‘buy-back’ programme? A share buyback allows a company to use surplus cash to repurchase its own stock. Since launching the programme in August 2025, iOCO has bought back more than 4 million shares, which are being held as treasury shares rather than being cancelled.

Why did it do this? The JSE-listed company believes the shares are undervalued and that buying them back is a better use of capital than holding excess cash. The board has stressed that the repurchases do not compromise the group’s financial position. iOCO said it continues to meet solvency and liquidity requirements and retains sufficient working capital to fund operations and meet its debt obligations.

Why it matters: The buybacks come as iOCO’s turnaround gathers momentum following years of restructuring under its former EOH identity. The group returned to profitability in its 2025 financial year and is now generating strong cash flows. For retail investors, the buybacks do not deliver immediate cash returns, but they can improve earnings per share and signal growing confidence in the sustainability of the recovery.
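For the curious, here is a back-of-the-envelope sketch of why a buyback can lift earnings per share even when profit stays flat. The earnings and share-count figures below are hypothetical placeholders, not iOCO’s actual numbers; only the roughly 4 million repurchased shares echoes the story. The sketch also assumes treasury shares are left out of the share count used for EPS and ignores the time-weighting a real EPS calculation would apply.

```python
def earnings_per_share(net_earnings: float, shares_in_issue: float,
                       treasury_shares: float = 0.0) -> float:
    """Simplified EPS: earnings divided by shares outstanding.

    Shares outstanding = shares in issue minus treasury shares
    (repurchased shares held by the company are assumed to be excluded).
    """
    outstanding = shares_in_issue - treasury_shares
    if outstanding <= 0:
        raise ValueError("shares outstanding must be positive")
    return net_earnings / outstanding


# Hypothetical inputs, for illustration only (not iOCO's reported figures).
NET_EARNINGS = 100_000_000.0      # annual profit, in rand
SHARES_IN_ISSUE = 600_000_000.0   # shares before any buyback
BOUGHT_BACK = 4_000_000.0         # about the size of the programme in the story

eps_before = earnings_per_share(NET_EARNINGS, SHARES_IN_ISSUE)
eps_after = earnings_per_share(NET_EARNINGS, SHARES_IN_ISSUE, BOUGHT_BACK)
uplift_pct = (eps_after / eps_before - 1) * 100

print(f"EPS before buyback: R{eps_before:.4f}")
print(f"EPS after buyback:  R{eps_after:.4f}")
print(f"Uplift: {uplift_pct:.2f}%")
```

Same profit, fewer shares to split it across: that is the whole mechanism. With these placeholder inputs the uplift is small, which is why buybacks tend to matter more as a confidence signal than as an immediate earnings boost.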

M&A

South Africa’s data centre operator OADC secures regulatory approval to acquire seven NTT Data facilities

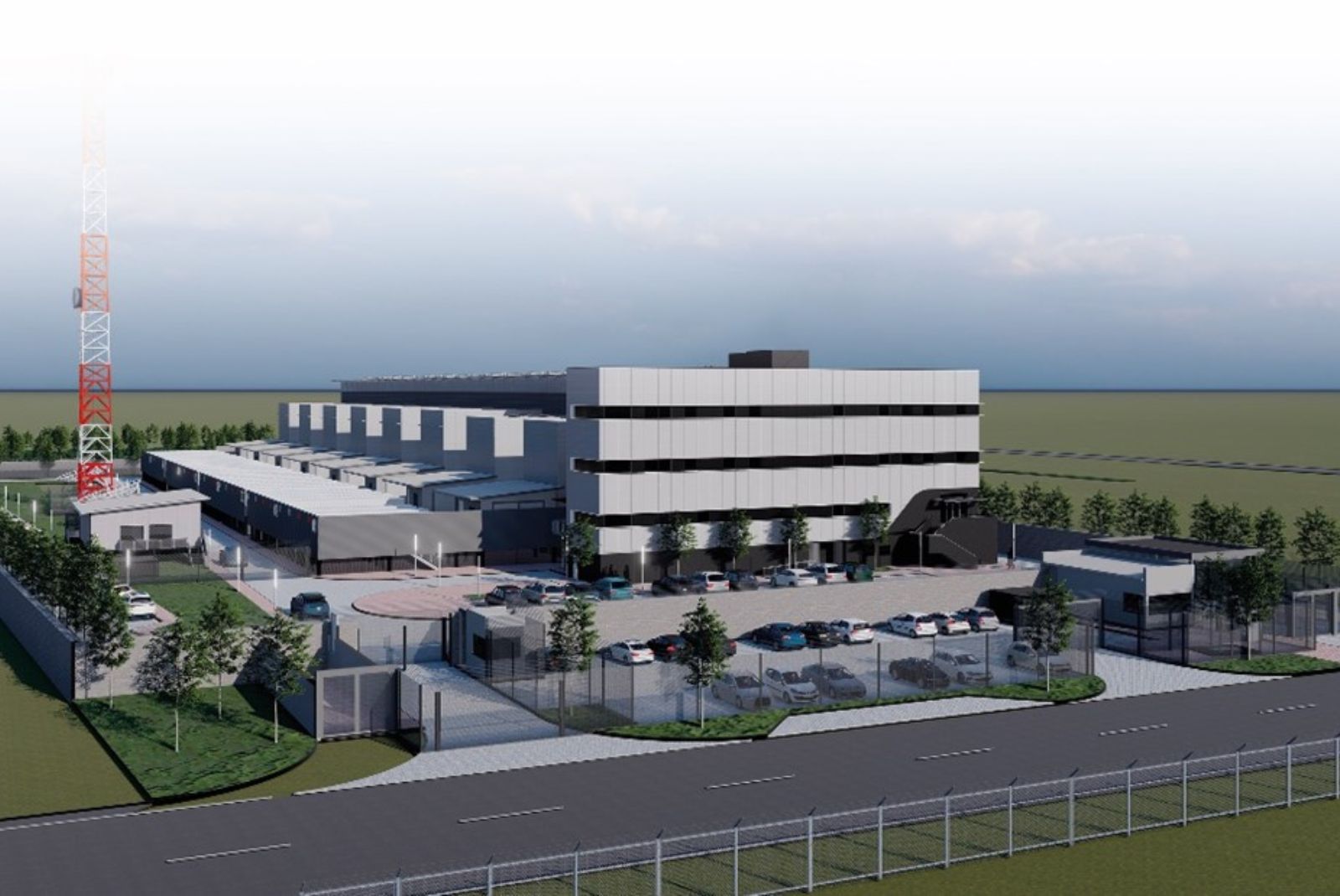

Image Source: OADC

The South African Competition Commission has greenlit a deal for Open Access Data Centres (OADC) to acquire a portfolio of seven data centre facilities from NTT Data (formerly Dimension Data).

The acquisition includes facilities in Bloemfontein, Cape Town, East London, Gqeberha, Umhlanga, and two sites in Johannesburg (Bryanston and Parklands). Along with the physical buildings, OADC—a subsidiary of the WIOCC Group—will take over the associated infrastructure, equipment, and existing supplier agreements.

The context: OADC is already a heavy hitter in the African digital infrastructure space, with existing hubs in Johannesburg, Durban, and Cape Town. By absorbing seven new sites, OADC significantly increases its “edge” data centre capacity, allowing it to offer colocation and connectivity services in several cities where it previously lacked a physical footprint.

Between the lines: While the Commission found no competition concerns, the deal comes with a catch. To meet “public interest” requirements, OADC has committed to implementing a Historically Disadvantaged Persons (HDP) transaction as a condition of the approval.

Zoom out: This move, in addition to the group’s securing R1.1 billion ($65 million) in debt financing, reinforces the WIOCC Group’s strategy of building a converged open-access digital ecosystem. By linking these new data centres to its existing subsea and terrestrial fibre networks, OADC is positioning itself to capture the growing demand for local data storage and faster processing across sub-Saharan Africa.

CRYPTO TRACKER

The World Wide Web3

Source:

| Coin Name | Current Value | Day | Month |
|---|---|---|---|
| Bitcoin | $95,523 | + 1.39% | + 4.53% |
| Ether | $2,921 | + 3.41% | – 3.06% |
| Yooldo | $0.4046 | – 1.27% | + 19.84% |
| Solana | $122.63 | – 3.70% | – 9.65% |

* Data as of 06.45 AM WAT, January 6, 2026.

Job Openings

- Deel —Senior Risk Analyst — Remote (Nigeria)

- Migo —Growth & Product Marketing Analyst — Lagos, Nigeria

- Piggyvest —Product Marketing & Communications Lead — Lagos, Nigeria

There are more jobs on TechCabal’s job board. If you have job opportunities to share, please submit them at bit.ly/tcxjobs.

- $670m Sango Capital on exporting African tech to global markets

- How Nigeria plans to use banks and fintechs to recover tax debt

Written by: Muktar Oladunmade, Opeyemi Kareem, Emmanuel Nwosu, and Zia Yusuf

Edited by: Ganiu Oloruntade

Want more of TechCabal?

Sign up for our insightful newsletters on the business and economy of tech in Africa.

- The Next Wave: futuristic analysis of the business of tech in Africa.

- Francophone Weekly by TechCabal: insider insights and analysis of Francophone Africa’s tech ecosystem.

P.S. If you often miss TC Daily in your inbox, check your Promotions folder and move any edition of TC Daily from “Promotions” to your “Main” or “Primary” folder, and TC Daily will always come to you.
